-

US anti-disinformation guardrails fall in Trump's first 100 days

US anti-disinformation guardrails fall in Trump's first 100 days

-

Dick Barnett, two-time NBA champ with Knicks, dies at 88

-

PSG hope to have Dembele firing for Arsenal Champions League showdown

PSG hope to have Dembele firing for Arsenal Champions League showdown

-

Arteta faces Champions League showdown with mentor Luis Enrique

-

Niemann wins LIV Mexico City to secure US Open berth

Niemann wins LIV Mexico City to secure US Open berth

-

Slot plots more Liverpool glory after Premier League triumph

-

Novak and Griffin win PGA pairs event for first tour titles

Novak and Griffin win PGA pairs event for first tour titles

-

Inter Miami unbeaten MLS run ends after Dallas comeback

-

T'Wolves rally late to beat Lakers, Knicks edge Pistons amid controversy

T'Wolves rally late to beat Lakers, Knicks edge Pistons amid controversy

-

Japan's Saigo wins playoff for LPGA Chevron title and first major win

-

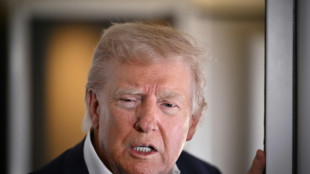

Trump tells Putin to 'stop shooting' and make a deal

Trump tells Putin to 'stop shooting' and make a deal

-

US says it struck 800 targets in Yemen, killed 100s of Huthis since March 15

-

Conflicts spur 'unprecedented' rise in military spending

Conflicts spur 'unprecedented' rise in military spending

-

Gouiri hat-trick guides Marseille back to second in Ligue 1

-

Racing 92 thump Stade Francais to push rivals closer to relegation

Racing 92 thump Stade Francais to push rivals closer to relegation

-

Inter downed by Roma, McTominay fires Napoli to top of Serie A

-

Usyk's unification bout against Dubois confirmed for July 19

Usyk's unification bout against Dubois confirmed for July 19

-

Knicks edge Pistons for 3-1 NBA playoff series lead

-

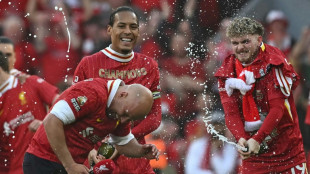

Slot praises Klopp after Liverpool seal Premier League title

Slot praises Klopp after Liverpool seal Premier League title

-

FA Cup glory won't salvage Man City's troubled season: Guardiola

-

Bumrah, Krunal Pandya star as Mumbai and Bengaluru win in IPL

Bumrah, Krunal Pandya star as Mumbai and Bengaluru win in IPL

-

Amorim says 'everything can change' as Liverpool equal Man Utd title record

-

Iran's Khamenei orders probe into port blast that killed 40

Iran's Khamenei orders probe into port blast that killed 40

-

Salah revels in Liverpool's 'way better' title party

-

Arsenal stun Lyon to reach Women's Champions League final

Arsenal stun Lyon to reach Women's Champions League final

-

Slot 'incredibly proud' as Liverpool celebrate record-equalling title

-

Israel strikes south Beirut, prompting Lebanese appeal to ceasefire guarantors

Israel strikes south Beirut, prompting Lebanese appeal to ceasefire guarantors

-

Smart Slot reaps rewards of quiet revolution at Liverpool

-

Krunal Pandya leads Bengaluru to top of IPL table

Krunal Pandya leads Bengaluru to top of IPL table

-

Can Trump-Zelensky Vatican talks bring Ukraine peace?

-

Van Dijk hails Liverpool's 'special' title triumph

Van Dijk hails Liverpool's 'special' title triumph

-

Five games that won Liverpool the Premier League

-

'Sinners' tops N.America box office for second week

'Sinners' tops N.America box office for second week

-

Imperious Liverpool smash Tottenham to win Premier League title

-

Man City sink Forest to reach third successive FA Cup final

Man City sink Forest to reach third successive FA Cup final

-

Toll from Iran port blast hits 40 as fire blazes

-

Canada car attack suspect had mental health issues, 11 dead

Canada car attack suspect had mental health issues, 11 dead

-

Crowds flock to tomb of Pope Francis, as eyes turn to conclave

-

Inter downed by Roma, AC Milan bounce back with victory in Venice

Inter downed by Roma, AC Milan bounce back with victory in Venice

-

Religious hate has no place in France, says Macron after Muslim killed in mosque

-

Last day of Canada election campaign jolted by Vancouver attack

Last day of Canada election campaign jolted by Vancouver attack

-

Barcelona crush Chelsea to reach women's Champions League final

-

Nine killed as driver plows into Filipino festival in Canada

Nine killed as driver plows into Filipino festival in Canada

-

Germany marks liberation of Bergen-Belsen Nazi camp

-

Hojlund strikes at the death to rescue Man Utd in Bournemouth draw

Hojlund strikes at the death to rescue Man Utd in Bournemouth draw

-

Zelensky says Ukraine not kicked out of Russia's Kursk

-

Zverev, Sabalenka battle through in Madrid Open, Rublev defence over

Zverev, Sabalenka battle through in Madrid Open, Rublev defence over

-

Ruthless Pogacar wins Liege-Bastogne-Liege for third time

-

Bumrah claims 4-22 as Mumbai register five straight IPL wins

Bumrah claims 4-22 as Mumbai register five straight IPL wins

-

No place for racism, hate in France, says Macron after Muslim killed in mosque

Is AI's meteoric rise beginning to slow?

A quietly growing belief in Silicon Valley could have immense implications: the breakthroughs from large AI models -- the ones expected to bring human-level artificial intelligence in the near future -- may be slowing down.

Since the frenzied launch of ChatGPT two years ago, AI believers have maintained that improvements in generative AI would accelerate exponentially as tech giants kept adding fuel to the fire in the form of data for training and computing muscle.

The reasoning was that delivering on the technology's promise was simply a matter of resources -- pour in enough computing power and data, and artificial general intelligence (AGI) would emerge, capable of matching or exceeding human-level performance.

Progress was advancing at such a rapid pace that leading industry figures, including Elon Musk, called for a moratorium on AI research.

Yet the major tech companies, including Musk's own, pressed forward, spending tens of billions of dollars to avoid falling behind.

OpenAI, ChatGPT's Microsoft-backed creator, recently raised $6.6 billion to fund further advances.

xAI, Musk's AI company, is in the process of raising $6 billion, according to CNBC, to buy 100,000 Nvidia chips, the cutting-edge electronic components that power the big models.

However, there appear to be problems on the road to AGI.

Industry insiders are beginning to acknowledge that large language models (LLMs) aren't scaling endlessly higher at breakneck speed when pumped with more power and data.

Despite the massive investments, performance improvements are showing signs of plateauing.

"Sky-high valuations of companies like OpenAI and Microsoft are largely based on the notion that LLMs will, with continued scaling, become artificial general intelligence," said AI expert and frequent critic Gary Marcus. "As I have always warned, that's just a fantasy."

- 'No wall' -

One fundamental challenge is the finite amount of language-based data available for AI training.

According to Scott Stevenson, CEO of AI legal tasks firm Spellbook, who works with OpenAI and other providers, relying on language data alone for scaling is destined to hit a wall.

"Some of the labs out there were way too focused on just feeding in more language, thinking it's just going to keep getting smarter," Stevenson explained.

Sasha Luccioni, researcher and AI lead at startup Hugging Face, argues a stall in progress was predictable given companies' focus on size rather than purpose in model development.

"The pursuit of AGI has always been unrealistic, and the 'bigger is better' approach to AI was bound to hit a limit eventually -- and I think this is what we're seeing here," she told AFP.

The AI industry contests these interpretations, maintaining that progress toward human-level AI is unpredictable.

"There is no wall," OpenAI CEO Sam Altman posted Thursday on X, without elaboration.

Anthropic's CEO Dario Amodei, whose company develops the Claude chatbot in partnership with Amazon, remains bullish: "If you just eyeball the rate at which these capabilities are increasing, it does make you think that we'll get there by 2026 or 2027."

- Time to think -

Nevertheless, OpenAI has delayed the release of the awaited successor to GPT-4, the model that powers ChatGPT, because its increase in capability is below expectations, according to sources quoted by The Information.

Now, the company is focusing on using its existing capabilities more efficiently.

This shift in strategy is reflected in its recent o1 model, designed to provide more accurate answers through improved reasoning rather than increased training data.

Stevenson said an OpenAI shift to teaching its model to "spend more time thinking rather than responding" has led to "radical improvements".

He likened the advent of AI to the discovery of fire. Rather than tossing on more fuel in the form of data and computing power, it is time to harness the breakthrough for specific tasks.

Stanford University professor Walter De Brouwer likens advanced LLMs to students transitioning from high school to university: "The AI baby was a chatbot which did a lot of improv" and was prone to mistakes, he noted.

"The homo sapiens approach of thinking before leaping is coming," he added.

P.Mathewson--AMWN